Role

Designed the rules, lifecycle, agent roles, launcher, dashboard flow, and the transition from v1 experiments into a structured v2 system.

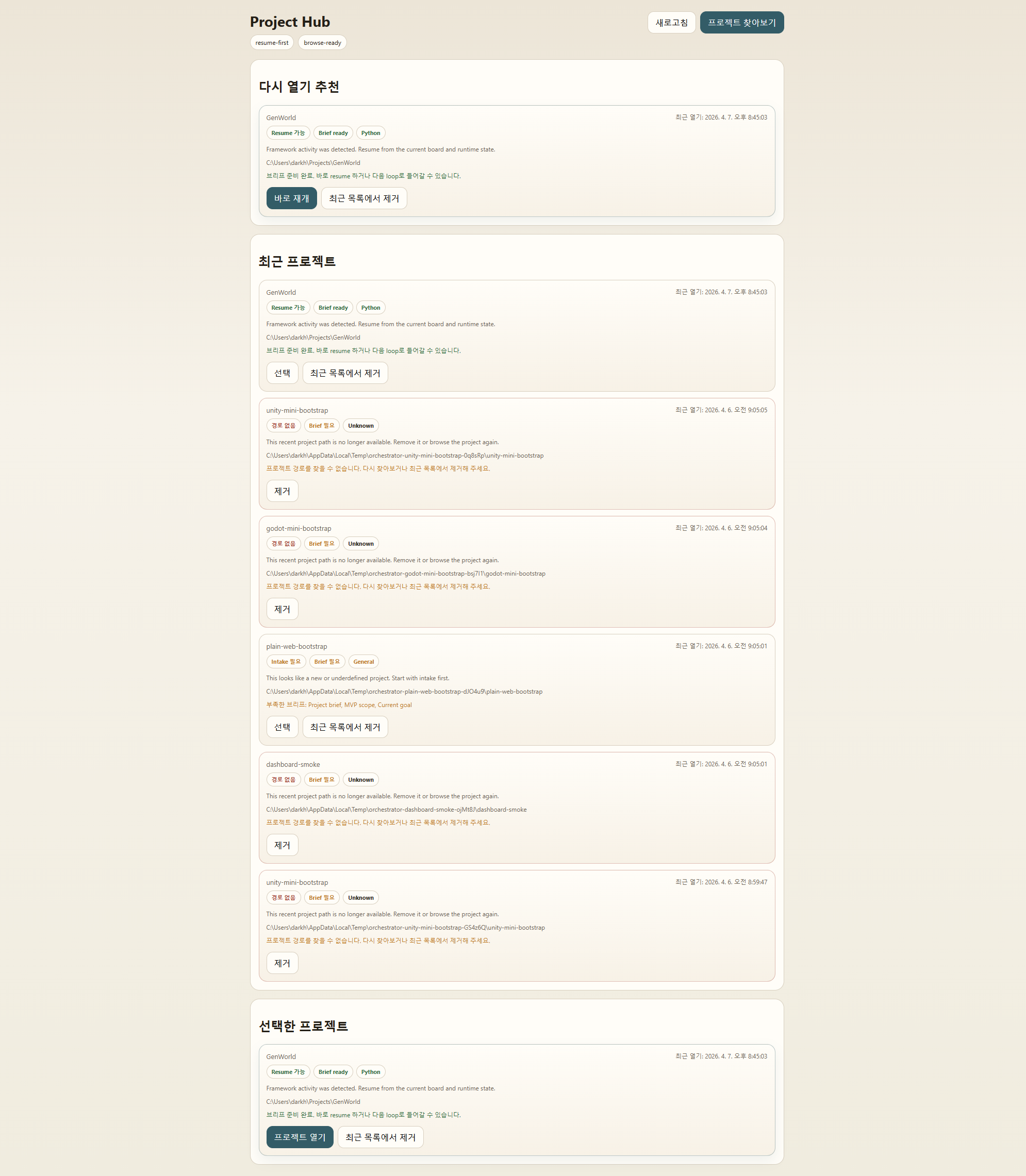

A reusable framework that turns role-based AI collaboration, review rules, and operating flow into an explicit system instead of repeating prompt structure from scratch on every project.

Every new project required rebuilding the same role split, review steps, and failure rules for AI collaboration.

A reusable framework with 4 agents, 66 tests, and 119 feature definitions tied to a launcher and dashboard structure.

This is evidence that I can move AI work from experimentation into a repeatable operating structure.

Version 1 proved the operating idea with Bash and Markdown. Version 2 turned that experience into a TypeScript component model with event-driven structure and clearer ownership boundaries.

The important point is not the agent count alone. It is that the workflow now carries rules for review, rejection, escalation, and launch flow instead of leaving those decisions implicit.
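To illustrate what "explicit rules" can look like in a TypeScript, event-driven model, here is a minimal sketch. It is not the framework's actual code: the state names, event names, the `transition` function, and the `MAX_REJECTIONS` threshold are all hypothetical, chosen only to show review, rejection, escalation, and launch expressed as data and a single transition function rather than as prompt conventions.

```typescript
// Hypothetical sketch: none of these names come from the real framework.
type State = "drafting" | "in_review" | "escalated" | "approved" | "launched";

type WorkflowEvent =
  | { type: "submit" }
  | { type: "approve" }
  | { type: "reject"; reason: string }
  | { type: "resolve" }
  | { type: "launch" };

interface Workflow {
  state: State;
  rejections: number; // rejections since the last escalation was resolved
}

// Assumed threshold: a third rejection escalates instead of returning to drafting.
const MAX_REJECTIONS = 2;

// Returns the next workflow state, or throws if the event is not allowed.
function transition(wf: Workflow, ev: WorkflowEvent): Workflow {
  switch (wf.state) {
    case "drafting":
      if (ev.type === "submit") return { ...wf, state: "in_review" };
      break;
    case "in_review":
      if (ev.type === "approve") return { ...wf, state: "approved" };
      if (ev.type === "reject") {
        const rejections = wf.rejections + 1;
        const state: State = rejections > MAX_REJECTIONS ? "escalated" : "drafting";
        return { state, rejections };
      }
      break;
    case "escalated":
      // A human (or lead agent) resolves the escalation and resets the counter.
      if (ev.type === "resolve") return { state: "drafting", rejections: 0 };
      break;
    case "approved":
      if (ev.type === "launch") return { ...wf, state: "launched" };
      break;
    case "launched":
      break; // terminal state: no further events are accepted
  }
  throw new Error(`Event "${ev.type}" is not allowed in state "${wf.state}"`);
}

// Usage: the happy path from draft to launch.
let wf: Workflow = { state: "drafting", rejections: 0 };
wf = transition(wf, { type: "submit" });
wf = transition(wf, { type: "approve" });
wf = transition(wf, { type: "launch" });
```

Because every allowed move lives in one function, a skipped review (for example, launching straight from `drafting`) fails loudly instead of depending on an agent remembering the rule.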